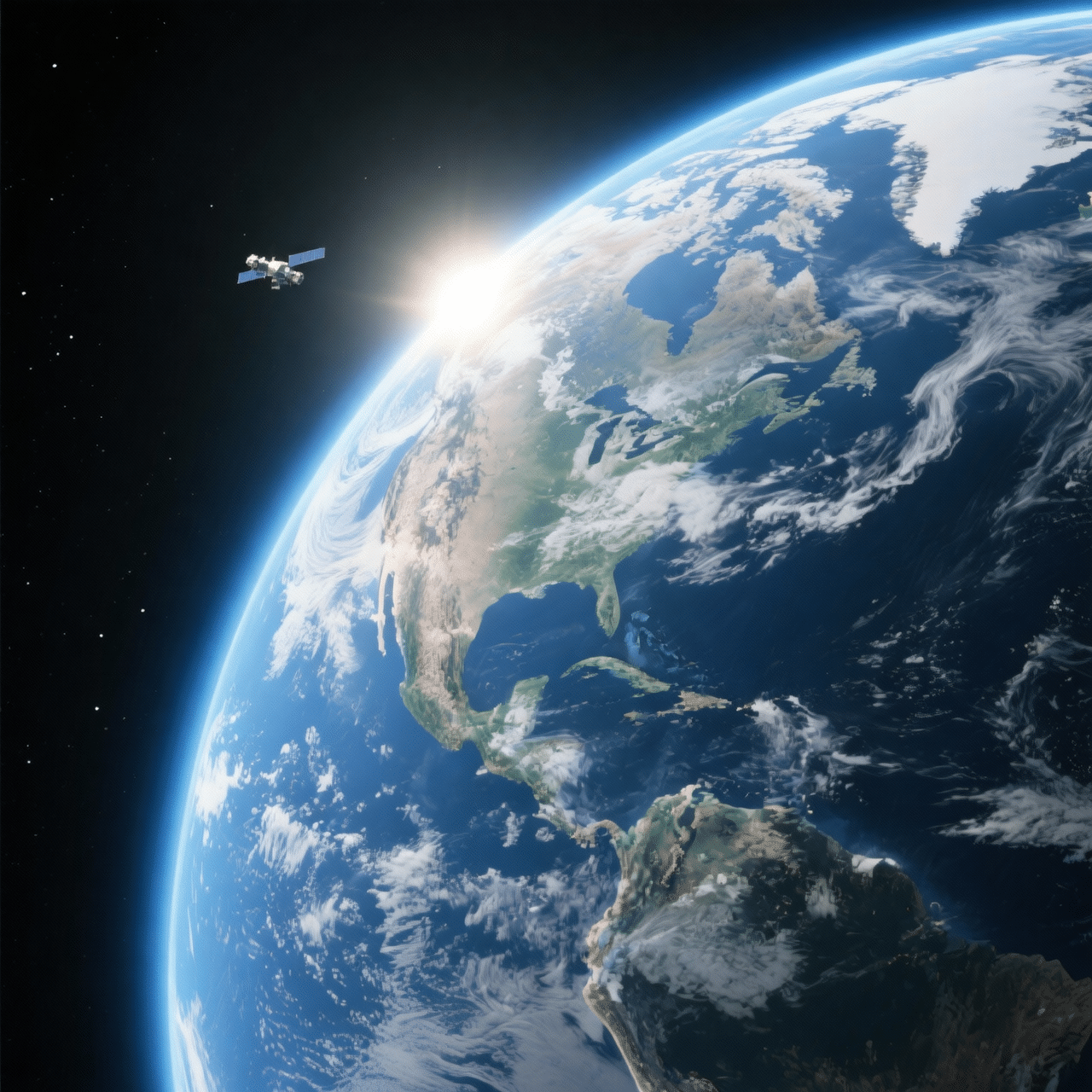

Advanced image generation model focused on photorealism, natural detail, and accurate visual behavior. It goes beyond the literal prompt and interprets context, lighting, materials, and spatial relationships. It combines strong rendering with precise control to create believable, production-ready visuals. Ideal for product imagery, lifestyle content, and realistic scenes where detail matters.

Access and run models in one Phygital+ workspace, without juggling multiple tools and accounts.

100+ teams use Phygital+

Phygital+ isn’t just a platform — it’s a hands-on partnership. We guide your team through every step of adopting AI in real workflows.

Generate new visuals and refine existing images with controlled changes.

Built for production assets where layout and clarity matter.

Cleaner typography for headlines, labels, and CTAs.

Change details without breaking lighting, framing, or style.

Task & What WAN Image delivers

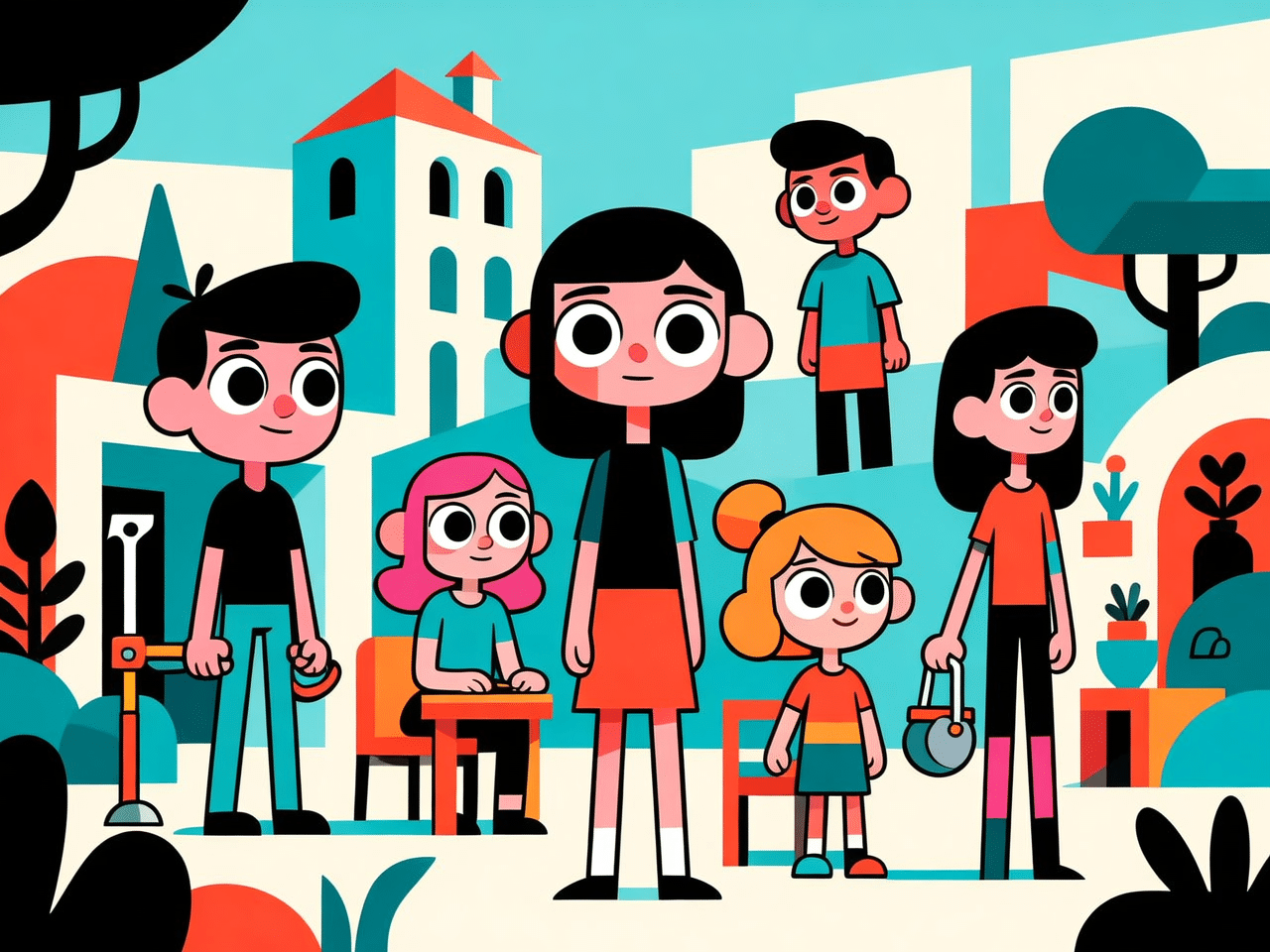

Consistent artistic style across characters, environments, and props

Clean product rendering with accurate color and material detail

Text in images rendered correctly across Latin, Cyrillic, CJK, and other scripts

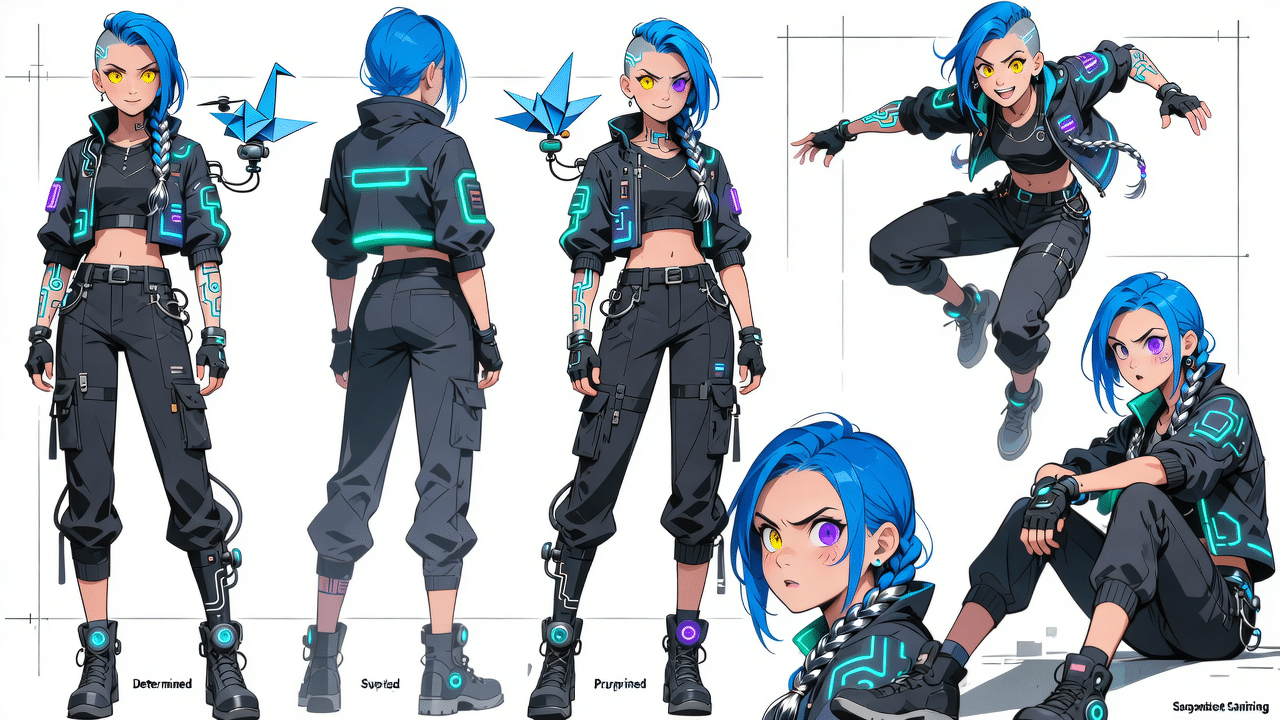

Detailed character sheets with consistent appearance across poses

Environments and settings built from short descriptive prompts

Visual tone and cultural context adapted to non-Western markets

Upload an existing image to edit or generate a new one using a prompt.

Define the scene, lighting, and materials — WAN Image understands context and visual relationships.

Refine outputs quickly, adjust details, and export production-ready visuals.

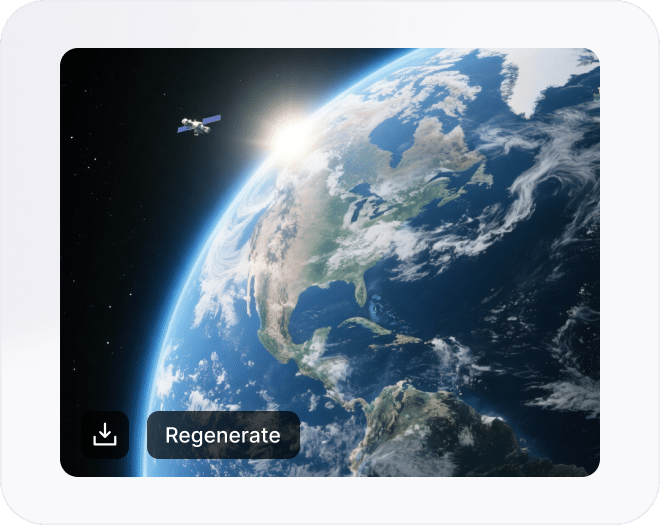

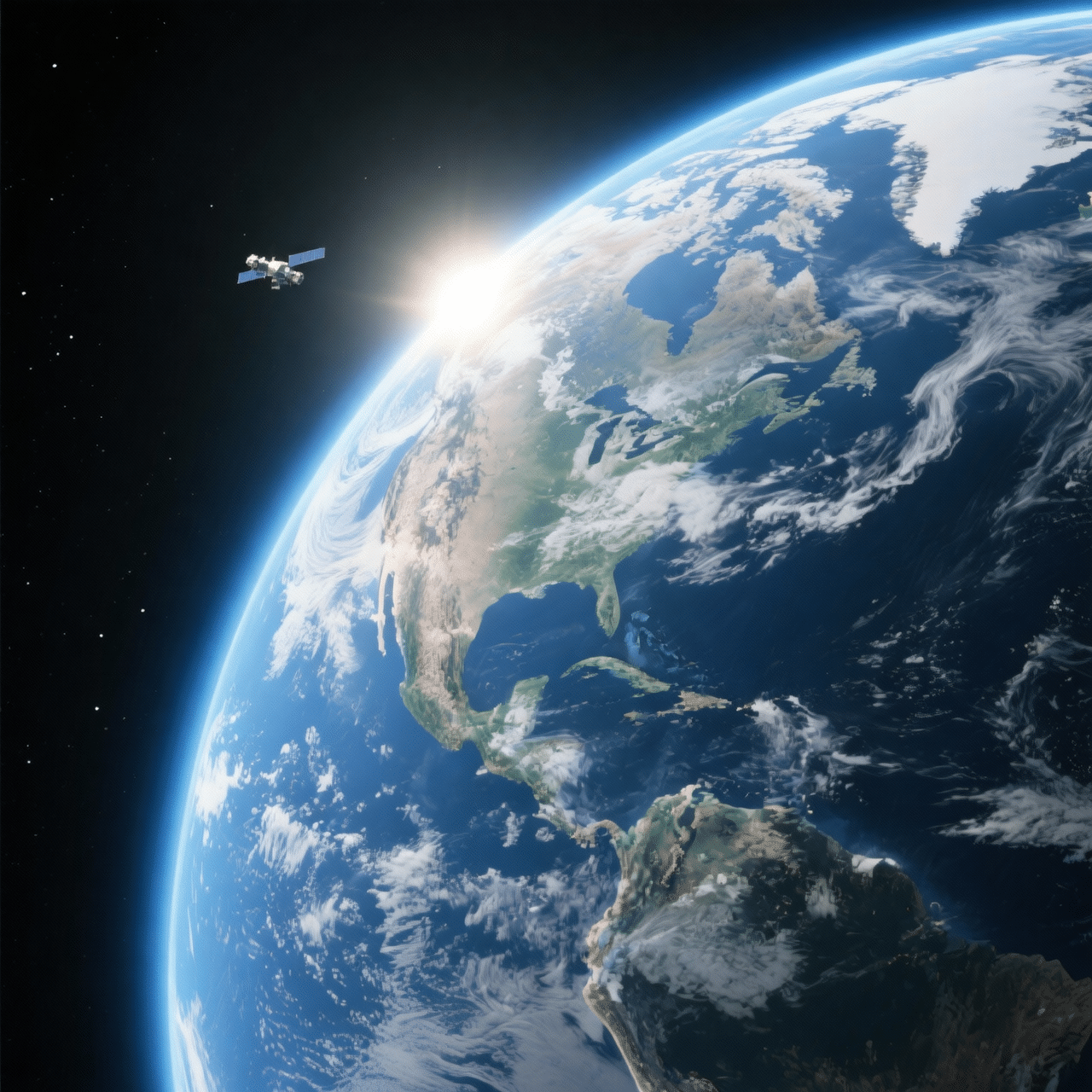

Explore real examples created with WAN Image. Each visual includes the final result and prompt — copy it, generate your own version, then refine it to fit your product, brand, or scene.

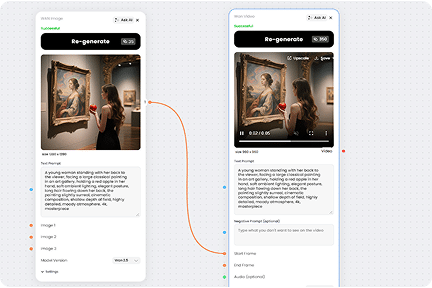

Most image generators are disconnected from video and editing tools.

On Phygital+, Wan Image is part of a continuous pipeline:

Animate it directly with Wan Video using the same model family

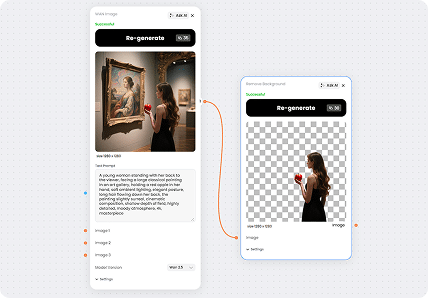

Remove or swap the background with Remove Background

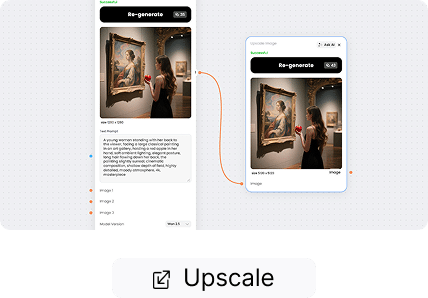

Send to Upscale Image without leaving the platform

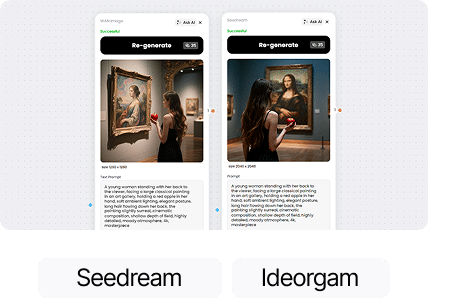

Try Seedream or Ideogram right alongside it

No copy-pasting files between services.

WAN Image goes beyond standard text-to-image models by focusing on photorealism and natural visual behavior. Instead of simply rendering descriptions, it interprets scenes as real environments — understanding how light interacts with materials, how objects relate in space, and how depth and perspective work together. Even with short prompts, the model can infer missing details and produce coherent, believable results — closer to real photography. This makes it especially powerful for product visuals, lifestyle imagery, and scenarios where realism and accuracy are critical.

WAN Image enables targeted updates without disrupting the rest of the image. Adjust objects, lighting, or materials while preserving composition, depth, and realism. The model maintains visual consistency, reducing unwanted changes and speeding up iteration cycles. This results in cleaner outputs, fewer manual corrections, and faster creative workflows.

Discover the best-performing AI models in one place — generate images and video, enhance quality, and build faster creative workflows without switching tools.

Wan Image is an AI image generation model developed by Alibaba, available in versions 2.2 and 2.5. It supports a wide range of visual styles — from photorealistic to illustrated and stylized — with strong multilingual text rendering. On Phygital+, it’s available as part of a full AI workspace that includes the Wan Video model from the same family.

WAN 2.5 is a proprietary API model focused on image quality and speed — generating images up to 1440×1440 in ~10 seconds with optional prompt expansion. WAN 2.2 is the open-source version that runs locally on consumer GPUs, better suited for self-hosted setups. On Phygital+, WAN 2.5 is the default — no local setup needed.

WAN 2.5 handles a wide range — photorealistic photography, cinematic landscapes, concept art, sci-fi and cyberpunk aesthetics, fantasy characters, and abstract compositions. It supports prompts up to 2000 characters in English and Chinese, making it well-suited for detailed scene descriptions.

WAN 2.5 accepts prompts in English and Chinese. For text rendered inside generated images — labels, signs, UI elements — it works best with short strings in clear visual contexts. For precise in-image typography across multiple languages, GPT Image or Nano Banana 2 tend to perform better.

WAN 2.5 supports outputs up to 1440×1440 pixels with aspect ratios from 1:4 to 4:1. You can generate up to 4 variations per request. On Phygital+, format and aspect ratio are configurable directly in the interface.
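The limits quoted above (sides up to 1440 pixels, aspect ratios from 1:4 to 4:1, up to 4 variations, prompts up to 2000 characters) can be checked before a request is sent. The Python sketch below is illustrative only: `ImageRequest`, `validate`, and `largest_size` are hypothetical names, not part of any Phygital+ or WAN API, and it assumes "up to 1440×1440" means each side is capped at 1440 pixels.

```python
from dataclasses import dataclass
from fractions import Fraction

# Limits taken from the text above. Treating 1440 as a per-side cap is an assumption.
MAX_SIDE = 1440
MIN_RATIO = Fraction(1, 4)   # 1:4 (tall)
MAX_RATIO = Fraction(4, 1)   # 4:1 (wide)
MAX_VARIATIONS = 4
MAX_PROMPT_CHARS = 2000


@dataclass(frozen=True)
class ImageRequest:
    """Hypothetical request shape, used only for this illustration."""
    prompt: str
    width: int
    height: int
    variations: int = 1


def validate(req: ImageRequest) -> list[str]:
    """Return a list of human-readable problems; an empty list means the request fits the limits."""
    problems = []
    if not req.prompt or len(req.prompt) > MAX_PROMPT_CHARS:
        problems.append(f"prompt must be 1 to {MAX_PROMPT_CHARS} characters")
    if not (1 <= req.width <= MAX_SIDE and 1 <= req.height <= MAX_SIDE):
        problems.append(f"each side must be between 1 and {MAX_SIDE} pixels")
    elif not MIN_RATIO <= Fraction(req.width, req.height) <= MAX_RATIO:
        # Only checked when both sides are valid, so the division is safe.
        problems.append("aspect ratio must be between 1:4 and 4:1")
    if not 1 <= req.variations <= MAX_VARIATIONS:
        problems.append(f"variations must be between 1 and {MAX_VARIATIONS}")
    return problems


def largest_size(ratio_w: int, ratio_h: int) -> tuple[int, int]:
    """Largest (width, height) for a given aspect ratio with the longer side at MAX_SIDE."""
    ratio = Fraction(ratio_w, ratio_h)
    if not MIN_RATIO <= ratio <= MAX_RATIO:
        raise ValueError("aspect ratio must be between 1:4 and 4:1")
    if ratio >= 1:
        return MAX_SIDE, int(MAX_SIDE / ratio)   # landscape or square
    return int(MAX_SIDE * ratio), MAX_SIDE       # portrait


if __name__ == "__main__":
    print(largest_size(16, 9))
    print(validate(ImageRequest("a ceramic mug on a oak table", 1440, 200)))
```

Under these assumptions, a 16:9 request tops out at 1440×810, while a 1440×200 canvas is rejected because its ratio (7.2:1) falls outside the 4:1 bound. In the Phygital+ interface these settings are chosen directly, so a check like this would only matter for scripted or batch use.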

Unlike single-purpose AI tools, Phygital+ connects 30+ models (like Flux, Recraft, Runway, and GPT-Image) in one workspace. You can chain them into workflows — for example, generate product photo → upscale → add background → create banner — and save that pipeline to reuse anytime.

Yes. WAN 2.5 supports image-to-image mode — provide a reference photo alongside your prompt to guide the output. Useful for style transfers, generating variations, and refining compositions while preserving the original structure.

WAN Image is included in your Phygital+ subscription — no per-generation fees. You get access to WAN 2.5 alongside 30+ other AI models under one plan. See the pricing page for current options.

Join content makers using Phygital+: every tool you need in one place.