When I first started looking at Veo 3.1 seriously, what stood out to me was not only the image quality. It was the fact that Google was clearly pushing it beyond silent clip generation.

Veo 3.1 feels different because it treats video more like an audiovisual scene. You are not only describing what the camera should see. You are often describing what the scene should sound like, how it should move, and how one shot should connect to the next.

That is why I would not describe Veo 3.1 as just another text-to-video model. I think of it more like a soundstage director. It is strongest when you give it a full scene to stage: subject, movement, environment, camera language, mood, and sometimes even dialogue or background sound.

In this guide I want to focus on four practical questions:

- which Veo 3.1 version to use and why

- which controls actually matter in a real workflow

- how to prompt Veo 3.1 without fighting the model

- how to use Veo inside a more repeatable pipeline in Phygital+

Veo 3.1 versions compared: Veo 3.1 vs Fast vs Lite

The Veo 3.1 family makes more sense once you stop asking for one universal “best” model.

As of April 2026, Google positions the current lineup like this on Vertex AI:

- Veo 3.1 for the highest visual fidelity and final production quality

- Veo 3.1 Fast for faster generation with high quality

- Veo 3.1 Lite for lower-cost, high-volume iteration

That distinction matters because the models are not only priced differently. They also fit different stages of the workflow.

Here is the simplest practical way I would frame them:

| Version | Best use | Why I would use it |
|---|---|---|
| Veo 3.1 | premium final shots | best when the clip itself needs to look like the strongest possible output |
| Veo 3.1 Fast | standard production workflows | good when I want speed without dropping too far in quality |
| Veo 3.1 Lite | high-volume testing and scaling | best for rapid branching, cheaper prompt iteration, and production systems that need volume |

Google’s model docs also separate the current GA models from older preview IDs. The important practical point is this: if you are building now, use the current Veo 3.1, Veo 3.1 Fast, and Veo 3.1 Lite generation models, not the older preview endpoints that were phased out.

My working rule

I would use the lineup like this:

- start in Lite when I need many prompt branches cheaply

- move to Fast when the idea is clearer and I want stronger iterations

- move to Veo 3.1 when the shot is close to final and visual fidelity matters more than speed

That is a much better way to use Veo than throwing your highest-cost model at every first draft.
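The working rule above can be written down as a small lookup, which is handy if tier selection lives in a script rather than in someone's head. This is a minimal sketch: the `Stage` names and the `pick_tier` helper are hypothetical, and the tier strings are the article's labels, not API model IDs.

```python
from enum import Enum


class Stage(Enum):
    """Hypothetical workflow stages, named for this sketch only."""
    EXPLORE = "explore"  # many prompt branches, cost matters most
    REFINE = "refine"    # idea is clearer, want stronger iterations
    FINAL = "final"      # shot is close to final, fidelity matters most


# Tier labels as used in this guide; these are not API model IDs.
TIER_BY_STAGE = {
    Stage.EXPLORE: "Veo 3.1 Lite",
    Stage.REFINE: "Veo 3.1 Fast",
    Stage.FINAL: "Veo 3.1",
}


def pick_tier(stage: Stage) -> str:
    """Return the tier this guide's working rule suggests for a stage."""
    return TIER_BY_STAGE[stage]


if __name__ == "__main__":
    for stage in Stage:
        print(stage.value, "->", pick_tier(stage))
```

Keeping the mapping in one place makes it easy to change the rule later, for example if a tier is not available on the surface you are using.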

Veo 3.1 pricing in Phygital: what matters in practice

In this guide, I give pricing in Phygital credits, not in Vertex AI dollars.

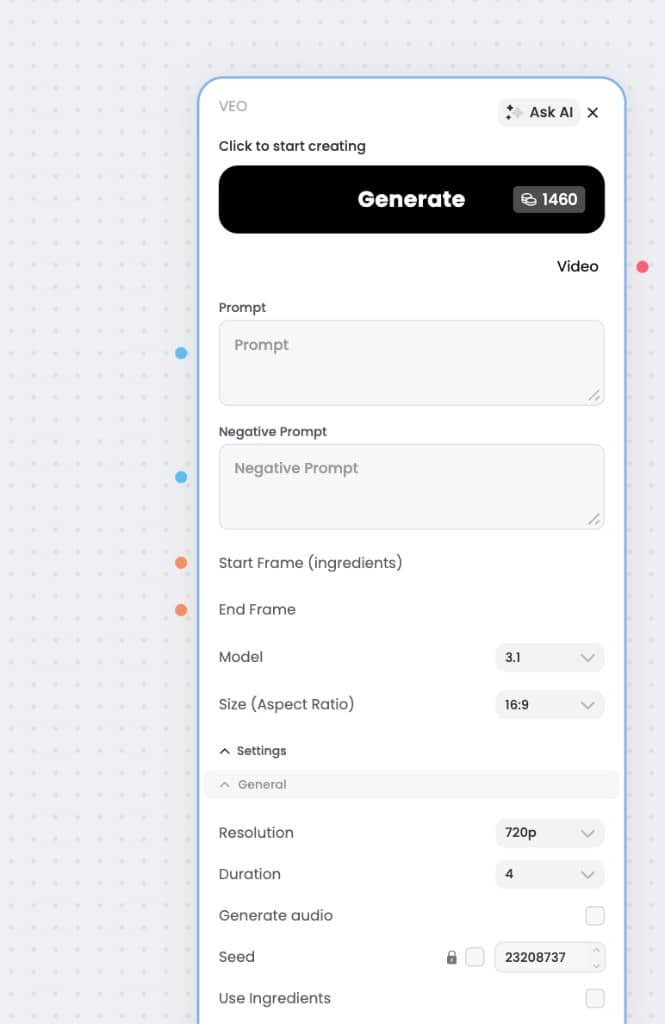

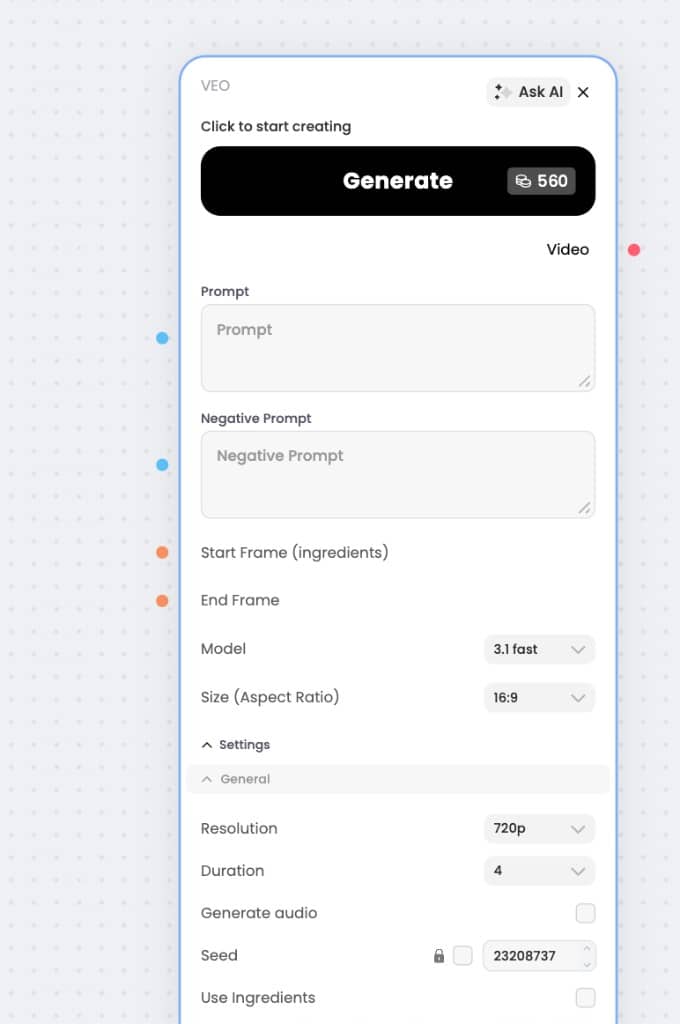

Based on the current Phygital UI screenshots checked on April 16, 2026, the visible cost for the same setup is:

- Veo 3.1 = 1460 credits

- Veo 3.1 Fast = 560 credits

These screenshots show the same practical settings:

- 16:9

- 720p

- 4-second duration

- Generate audio turned off

That is the most useful comparison for a reader because it reflects the real choice inside the Phygital workspace, not an abstract API billing table.

The practical conclusion is simple:

- Veo 3.1 Fast is the cheaper branching layer

- Veo 3.1 is the premium finishing layer

For this exact setup, Veo 3.1 costs about 2.6x the credits of Veo 3.1 Fast (1460 / 560), so I would not use it for early exploration unless I already knew the shot direction was strong.
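To make that trade-off concrete, here is a small arithmetic sketch. The two credit values come from the screenshots described above (16:9, 720p, 4 seconds, audio off). The helper names and the "five drafts plus one final" session are assumptions for illustration, not Phygital pricing rules.

```python
# Credit costs as read from the Phygital UI screenshots for one setup:
# 16:9, 720p, 4-second duration, Generate audio off.
CREDITS = {"Veo 3.1": 1460, "Veo 3.1 Fast": 560}


def cost_ratio(model_a: str, model_b: str) -> float:
    """How many times more credits model_a costs than model_b."""
    return CREDITS[model_a] / CREDITS[model_b]


def session_cost(fast_drafts: int, final_renders: int) -> int:
    """Total credits for a session mixing Fast drafts and Veo 3.1 finals."""
    return fast_drafts * CREDITS["Veo 3.1 Fast"] + final_renders * CREDITS["Veo 3.1"]


if __name__ == "__main__":
    print(round(cost_ratio("Veo 3.1", "Veo 3.1 Fast"), 2))  # 2.61
    print(session_cost(fast_drafts=5, final_renders=1))     # 4260
    print(session_cost(fast_drafts=0, final_renders=6))     # 8760
```

In this hypothetical session, six generations cost 4260 credits when five of them are Fast drafts, against 8760 credits when all six run on Veo 3.1.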

I keep Lite in the version comparison as the lower-cost tier from Google’s official model lineup, but I am not quoting a Phygital credit number for it here until there is a verified in-product screenshot for that mode too.

What Veo 3.1 officially supports

This is the part to check before you plan a shot around features that the model or the surface may not fully support.

According to Google’s official Vertex AI docs, Veo 3.1 supports:

- text-to-video

- image-to-video

- prompt rewriting

- reference asset images

- extend video

- first-and-last-frame generation

- 9:16 and 16:9 output

- 4, 6, or 8 second clips

- up to 4 outputs per prompt

- 24 FPS

There are also a few practical constraints worth remembering:

- reference image-to-video only supports 8 seconds

- image-to-video input images can be up to 20 MB

- 4k support is still marked as preview in Vertex AI docs

- support can differ depending on whether you are using Vertex AI, Gemini API, or Flow

That last point matters a lot. Veo is not one single interface. The model family is shared, but some controls arrive in one surface earlier than another or are exposed in slightly different ways.
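The documented limits listed above can be turned into a quick pre-flight check that runs before any credits are spent. This is a sketch under stated assumptions: `ClipRequest` and `find_problems` are hypothetical names, not part of any Google SDK, and the limits are the Vertex AI values quoted in this section, which may differ on other surfaces.

```python
from dataclasses import dataclass
from typing import List, Optional

# Limits as quoted above from the Vertex AI docs; other surfaces may differ.
ASPECT_RATIOS = {"16:9", "9:16"}
DURATIONS_S = {4, 6, 8}
MAX_OUTPUTS = 4
MAX_INPUT_IMAGE_MB = 20
REFERENCE_DURATION_S = 8


@dataclass
class ClipRequest:
    """Hypothetical request shape, for illustration only."""
    aspect_ratio: str = "16:9"
    duration_s: int = 8
    outputs: int = 1
    uses_reference_images: bool = False
    input_image_mb: Optional[float] = None


def find_problems(req: ClipRequest) -> List[str]:
    """Return human-readable problems; an empty list means the request fits."""
    problems = []
    if req.aspect_ratio not in ASPECT_RATIOS:
        problems.append(f"unsupported aspect ratio: {req.aspect_ratio}")
    if req.duration_s not in DURATIONS_S:
        problems.append(f"unsupported duration: {req.duration_s}s")
    if not 1 <= req.outputs <= MAX_OUTPUTS:
        problems.append(f"outputs must be 1-{MAX_OUTPUTS}, got {req.outputs}")
    if req.uses_reference_images and req.duration_s != REFERENCE_DURATION_S:
        problems.append("reference image-to-video is documented as 8 seconds only")
    if req.input_image_mb is not None and req.input_image_mb > MAX_INPUT_IMAGE_MB:
        problems.append(f"input image exceeds {MAX_INPUT_IMAGE_MB} MB")
    return problems


if __name__ == "__main__":
    print(find_problems(ClipRequest(duration_s=4, uses_reference_images=True)))
```

Returning a list of problems rather than raising on the first one makes it easier to fix a whole batch of requests in a single pass.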

How to use Veo 3.1 features without overcomplicating the workflow

The easiest mistake with Veo is trying to use every control at once.

The better approach is to ask: what problem am I solving in this shot?

Text-to-video

This is the cleanest starting point when the idea is still fluid.

Use it when:

- you are exploring concepts

- you want to test mood, action, or camera language

- you do not yet have a strong visual anchor

Why it matters:

This is the fastest way to learn how the model interprets your scene logic before you add more constraints.

Image-to-video

This is where Veo becomes much more reliable for commercial work.

Use it when:

- the opening frame matters

- the composition already exists

- brand, product, or character continuity matters

Why it matters:

Once the first frame is anchored, the generation stops feeling like a total re-invention every time.

Reference asset images / ingredients

Google’s official docs and Flow help pages make this one of the most important practical features.

Use it when:

- the same character must stay recognizable across multiple clips

- one object or product needs to remain stable

- you want several shots to share the same visual identity

Why it matters:

Prompt wording alone is often not enough for consistency. Reference assets give the model something concrete to preserve.

First and last frame

This is one of the best storytelling features in the whole Veo stack.

Use it when:

- you want a controlled transition between two images

- you want to animate between two planned points of view

- you need a specific beginning and ending composition

Why it matters:

This is much more controllable than hoping a freeform prompt lands on the transition you imagined.

Extend

Extend is the feature that turns short clip generation into sequencing.

Use it when:

- one shot is close, but too short

- you want to continue a motion beat

- you want to build longer audiovisual continuity from existing clips

Why it matters:

It helps Veo become part of a scene-building workflow instead of a one-shot toy.

Native audio

This is the feature that changes how you prompt Veo.

Use it when:

- dialogue matters

- sound effects matter

- ambient atmosphere is part of the scene itself

Why it matters:

You are not only directing visuals anymore. You are staging an audiovisual moment.

Veo 3.1 technical notes that are actually useful

Some technical details are worth surfacing because they change how I would work.

According to the official Vertex AI model docs:

- Veo 3.1 and Veo 3.1 Fast support text and image input

- Veo 3.1 Lite is currently preview and also documented for text and image workflows in the model family docs, but has fewer supported control features than the higher tiers

- output video format is MP4

- framerate is 24 FPS

- output count can go up to 4 videos per prompt

According to Flow help:

- some features are model-specific inside Flow

- Ingredients to Video is supported in Veo 3.1 Fast, but not in Veo 3.1 Quality

- Extend is landscape-only in Flow

- unsupported feature combinations can trigger a “switching you to a compatible model” message

This is exactly why Veo should be treated as a family of workflows, not a single checkbox list.

Prompting theory for Veo 3.1: think like a soundstage director

Kling often rewards camera-direction thinking. Hailuo often rewards performance-first thinking. Runway often rewards edit-intent thinking.

Veo 3.1 rewards full-scene staging.

That means a good Veo prompt usually answers:

- what the camera is looking at

- what the subject is doing

- what the environment contributes

- what the scene should feel like visually

- what the scene should sound like

Google’s official prompting guide suggests a five-part formula:

[Cinematography] + [Subject] + [Action] + [Context] + [Style & Ambiance]

That is already useful, but for real work I would extend it into a practical six-part prompt structure:

| Subject | Action | Scene | Camera | Lighting | Audio |
|---|---|---|---|---|---|
| who or what is on screen | what happens physically | where it happens | how the shot is seen | what shapes the mood visually | dialogue, ambient sound, or SFX |

This extra Audio column matters because Veo is one of the few mainstream video models where sound can no longer be treated like an afterthought.

The most useful prompting habits

I would use these rules:

- start with the camera language, not just the subject

- describe sound deliberately instead of leaving it vague

- avoid contradicting your image references with your text prompt

- use references when consistency matters more than improvisation

- use first-and-last-frame when you need a specific transformation

A simple Veo prompt formula I would actually use

[Shot type / movement] + [main subject] + [precise action] + [environment] + [lighting / mood] + [sound design or dialogue]

Example:

Medium tracking shot, a woman in a silver raincoat moving through a neon market alley, glancing back over her shoulder as paper lanterns sway above her. Wet pavement, crowded night market, reflective surfaces, cool magenta-blue lighting, atmospheric cyberpunk realism. Ambient sound: distant chatter, rain, scooter hum.

That is much more useful than simply writing “a cool cyberpunk woman walking in the rain.”
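If prompts are assembled in code, the formula above maps naturally onto a small structure with one field per slot. This is a minimal sketch: the `ScenePrompt` class, its field names, and the exact punctuation of the rendered string are my own assumptions, not a format required by Veo.

```python
from dataclasses import dataclass


@dataclass
class ScenePrompt:
    """One field per slot of the prompt formula (hypothetical helper)."""
    camera: str      # shot type / movement
    subject: str     # main subject
    action: str      # precise action
    scene: str       # environment
    lighting: str    # lighting / mood
    audio: str = ""  # sound design or dialogue; empty means no audio line

    def render(self) -> str:
        """Join the slots into one prompt string, camera language first."""
        visual = (
            f"{self.camera}, {self.subject} {self.action}. "
            f"{self.scene}, {self.lighting}."
        )
        if self.audio:
            return f"{visual} Ambient sound: {self.audio}."
        return visual


if __name__ == "__main__":
    prompt = ScenePrompt(
        camera="Medium tracking shot",
        subject="a woman in a silver raincoat",
        action="moving through a neon market alley",
        scene="Wet pavement, crowded night market",
        lighting="cool magenta-blue lighting",
        audio="distant chatter, rain, scooter hum",
    )
    print(prompt.render())
```

Keeping the slots separate makes it easy to branch on a single variable, for example swapping only `camera` or only `audio` while everything else stays fixed.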

21 Veo 3.1 prompt examples

These prompts are original examples written in the logic of Google’s official Veo prompting guidance, but not copied from official examples.

The two Veo generations below were made from prompts in this guide, so the reader can see what the model looks like in practice inside the article itself.

Cinematic example

Generated from the boxer prompt: low-angle morning run, pale blue haze, gritty realism.

Product ad example

Generated from the skincare serum prompt: bright editorial tabletop motion with a premium beauty-ad feel.

Cinematic

Product ad

Social media

Music video

Lifestyle

Fantasy

Advanced control / transitions

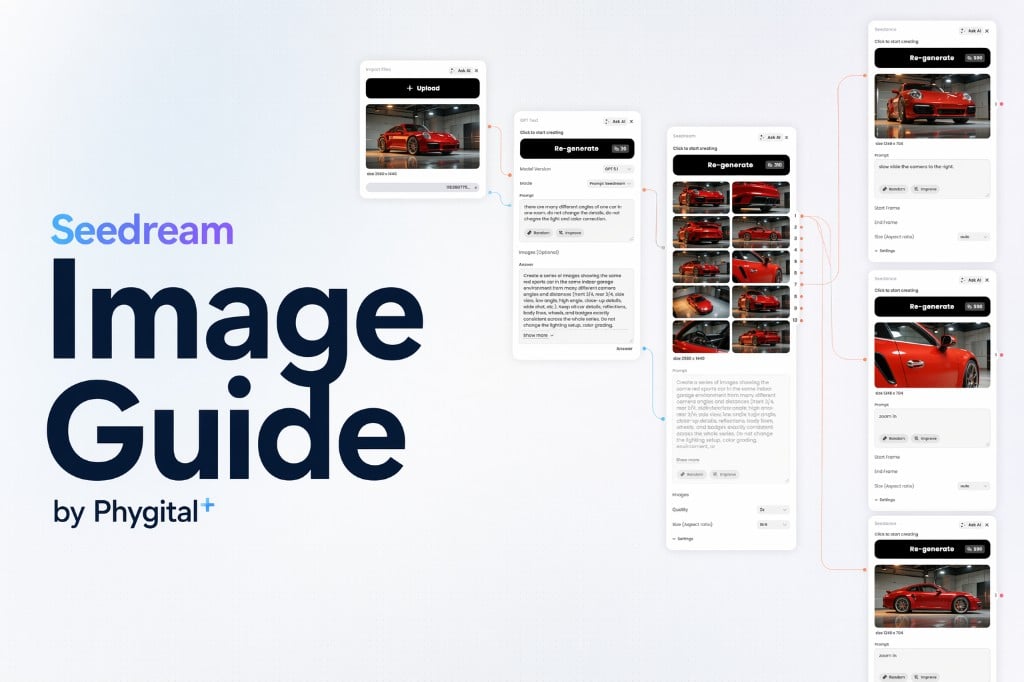

How to build a Veo 3.1 workflow in Phygital+

Veo becomes much more useful when it is treated as one layer in a bigger workflow rather than as the entire workflow.

In practice, I would use Phygital+ like this:

- Start with a concept image, storyboard frame, or clean prompt concept.

- Branch into Veo 3.1 Lite or Fast for several motion directions.

- Keep only the branch where subject, motion, and audio logic actually work together.

- Add references if the same character or product must survive into the next shot.

- Use first-and-last-frame or extend when the scene needs continuity instead of another disconnected clip.

- Move the most promising branch into Veo 3.1 for the strongest final output.

That matters because the creative problem is usually not “generate one random clip.”

Usually the problem is closer to:

- keep this character stable

- test three motion directions

- preserve the same mood across several clips

- connect one shot to the next

- avoid restarting from zero every time

That is exactly where a node-based workspace helps. Instead of bouncing between tabs, lost prompts, and disconnected reference files, you can keep generation, branching, comparison, and refinement inside one visual pipeline.

[Screenshot placeholder: Phygital+ workflow with Veo branches, reference images, and final selected output]

This is the part people often underestimate. Veo is powerful, but the real creative advantage comes from how you organize the iterations around it.

A practical Veo 3.1 workflow for consistency

If I needed one character to stay recognizable across several clips, I would not rely on text alone.

I would do this instead:

- Generate or prepare a strong anchor image of the character.

- Create 2-3 Veo branches from that same starting point.

- Compare which branch preserves identity, costume, and mood best.

- Save the winning references and reuse them for the next shot.

- Use first-and-last-frame only when the transition itself matters.

That kind of workflow is much more stable than rewriting the entire character from scratch in every prompt.
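The comparison step in the list above is easier to repeat when each branch is rated the same way. The sketch below assumes manual 1-5 ratings for identity, costume, and mood; the `Branch` structure and the tie-break on identity are hypothetical choices, not a Phygital+ or Veo feature.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Branch:
    """One Veo branch with manual 1-5 ratings (hypothetical structure)."""
    name: str
    identity: int  # does the character still look like the anchor image?
    costume: int   # are clothing and props preserved?
    mood: int      # does lighting and atmosphere match the intent?

    @property
    def total(self) -> int:
        return self.identity + self.costume + self.mood


def best_branch(branches: List[Branch]) -> Branch:
    """Pick the highest total; ties go to the stronger identity score."""
    return max(branches, key=lambda b: (b.total, b.identity))


if __name__ == "__main__":
    candidates = [
        Branch("A", identity=4, costume=3, mood=5),
        Branch("B", identity=5, costume=4, mood=4),
        Branch("C", identity=3, costume=5, mood=4),
    ]
    print(best_branch(candidates).name)  # B
```

The ratings stay subjective, but writing them down per branch makes it clearer why one set of references was carried into the next shot.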

It is also where Phygital+ fits naturally. The value is not “Veo inside a box.” The value is seeing the whole decision tree clearly.

FAQ

Why is first-and-last-frame not working the way I expect?

In Google’s official model docs, some frame-controlled workflows have narrower constraints than normal text-to-video. For example, reference image-to-video is documented as 8 seconds only. In API workflows, feature support can also depend on the surface, model ID, and current SDK version.

Why did Flow switch me to a compatible model?

Because Flow does not expose every feature in every Veo mode. Google’s Flow help docs explicitly say that unsupported feature combinations can trigger a switch to a compatible model.

Why are my ingredients not keeping the character consistent?

Google’s Flow guidance warns against conflicting instructions between prompt and visual references. It also recommends clean subject or product references on plain or segmented backgrounds. If the references are noisy or your text contradicts them, consistency usually drops.

Why is audio behaving strangely in some generations?

Because audio generation is still an actively improving part of the Veo experience. Google’s Flow help pages specifically note known issues, including muted speech when minors appear and on-screen subtitles triggering incorrectly in some speech generations.

Should I use Veo 3.1 Quality or Fast in Flow?

Use Quality when the final clip matters more than flexibility. Use Fast when you need more iteration speed or a feature combination that is only available there. Flow’s own feature matrix currently shows that some controls, like Ingredients to Video, are not exposed identically across the two.

When should I use Lite instead of Fast?

Use Lite when the goal is broad iteration, cheaper testing, or high-volume generation. Use Fast when you still care about speed, but want a stronger working-quality layer before moving to the flagship model.

Is Veo better for free prompting or controlled workflows?

It can do both, but I think Veo becomes much more valuable in controlled workflows. The moment you need continuity, references, staged audio, or transitions between shots, the model benefits from a pipeline mindset.
