AI video tools have become much better at making impressive first drafts. A single prompt can now produce motion, camera shifts, stylized scenes, and even shots that feel close to a finished ad or music visual.

But that is exactly where many creators get stuck. The video looks good enough to be exciting, yet not polished enough to publish.

That gap matters more than people expect. In real workflows, the generation step is only one part of the process. The final result still needs cleanup, timing decisions, format adjustments, and often some very practical fixes before it works for social media, brand content, or paid campaigns.

In other words: AI can generate a shot, but it is post-production that turns it into content.

Why generation is not the final step

Most AI video tools are optimized to produce interesting motion, not perfect deliverables.

A generated clip can be visually strong and still fail in small but important ways. The pacing may feel off. The framing may not fit the platform. The sound may be unusable. Text overlays may be missing. Cuts may feel abrupt. A scene may be beautiful, but still not work as a publish-ready asset.

That is why post-production still matters. It is the stage where raw generation becomes communication.

For creators, that means a video becomes easier to watch. For brands, it means the message becomes clearer. For teams, it means the asset becomes usable across multiple channels instead of remaining just an interesting experiment.

Common problems in AI-generated videos

The most common issue is not that AI video looks bad. It is that it looks unfinished.

Some clips are visually strong but slightly too long. Others have good motion, but the important moment comes too late. Some need reframing for vertical platforms. Some need subtitles. Some get the atmosphere right but still feel rough in the final output and need cleanup.

A few recurring problems show up again and again:

- weak or noisy audio

- awkward pacing

- mismatched aspect ratio

- lack of captions or voiceover

- rough cuts between clips

- beautiful visuals with no clear editorial finish

None of these problems are dramatic on their own. Together, they are usually the difference between “interesting output” and “publishable content.”

The two Runway examples below show exactly that middle zone. Both clips are visually strong, but they also show why generation still benefits from editing choices before publishing.

Forest Wanderer

A moody fantasy clip that already looks cinematic, but still benefits from post-production decisions like pacing, crop, captions, and soundtrack.

Stop-Motion Fox

A stylized Runway result that is visually strong, yet still needs editorial polish before it is publish-ready.

Cleaning the audio and removing background noise

Audio is one of the easiest places to lose quality fast.

Even when the visual side works, the soundtrack often needs help. There may be room tone, hiss, ambient clutter, inconsistent levels, or distracting background sound that makes the whole clip feel less professional.

That is especially true when AI video is combined with recorded voiceover, stock sound, or quick creator-style edits. In those cases, audio cleanup becomes one of the fastest ways to improve the final result.

If a clip already works visually but the sound is messy, a tool to remove background noise from video can clean it up before you add subtitles or voiceover and export the final version.

This is not the glamorous part of the process, but it is one of the highest-leverage ones. Better sound instantly makes AI-generated content feel more intentional.
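For readers who prefer a scriptable route, here is a minimal sketch of the cleanup step using FFmpeg's `afftdn` audio denoise filter. It assumes `ffmpeg` is installed and on the PATH. The helper name, the file names, and the default strength are illustrative assumptions, not settings taken from any particular tool. The function only builds the command, so you can inspect it before running anything.

```python
def build_denoise_command(src, dst, noise_reduction_db=12.0):
    """Build an ffmpeg command that denoises the audio and copies the video as-is.

    Hypothetical helper: the name and the default strength are assumptions.
    """
    # afftdn takes its noise reduction amount in dB through the `nr` option.
    if not 0.01 <= noise_reduction_db <= 97:
        raise ValueError("noise_reduction_db must be between 0.01 and 97")
    return [
        "ffmpeg",
        "-i", src,
        "-af", f"afftdn=nr={noise_reduction_db:g}",  # FFT-based audio denoiser
        "-c:v", "copy",  # leave the video stream untouched
        dst,
    ]

# File names are placeholders for illustration.
command = build_denoise_command("forest_wanderer.mp4", "forest_wanderer_clean.mp4")
print(" ".join(command))
# To run it for real: import subprocess; subprocess.run(command, check=True)
```

Start with a mild setting and listen to the result. Aggressive noise reduction can make voices sound thin or watery.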

Fixing pacing, cuts, and timing

Many AI clips fail because they were never edited, not because they were badly generated.

Sometimes the first second is weak and should be trimmed. Sometimes the motion peaks too late. Sometimes two good clips do not work together until the cut point changes. Sometimes a five-second generation only contains two seconds you actually need.

That is normal.

Post-production is where you decide:

- where the shot should really begin

- where it should end

- which beat deserves emphasis

- which parts slow the viewer down

- whether the clip should stand alone or work as part of a sequence

This is where timing becomes more important than prompt quality. A strong edit can make an average generation feel sharper. A weak edit can make a strong generation feel random.
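The "five seconds generated, two seconds needed" case can be made concrete with a small calculation. This is only a sketch: the function name and the lead-in and tail values are assumptions chosen for illustration, and times are kept in whole milliseconds to avoid rounding surprises.

```python
def trim_window(duration_ms, peak_ms, lead_in_ms, tail_ms):
    """Return (start_ms, end_ms) for a cut that keeps the peak moment plus some context."""
    if not 0 <= peak_ms <= duration_ms:
        raise ValueError("peak_ms must fall inside the clip")
    start = max(0, peak_ms - lead_in_ms)       # never start before the clip does
    end = min(duration_ms, peak_ms + tail_ms)  # never run past the last frame
    return start, end

# A five-second generation whose strongest beat lands at 3.2 seconds.
start, end = trim_window(duration_ms=5000, peak_ms=3200, lead_in_ms=800, tail_ms=1200)
print(start, end, end - start)  # 2400 4400 2000
```

Choosing the peak moment is still an editorial call. The code only keeps the cut inside the clip once you have made it.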

Adding subtitles, captions, and voiceover

Most published video content does not rely on visuals alone.

If the clip is going to social media, subtitles often matter immediately. If it is going into an ad, a voiceover or on-screen message may be necessary. If it is for a landing page or presentation, the viewer may need context fast.

AI generation rarely solves that editorial layer by itself.

Post-production is where you add:

- subtitles for silent viewing

- captions for clarity

- simple titles and labels

- voiceover timing

- sound design accents

- a clearer communication structure

This is especially important for creators and marketers. A visually impressive clip can still underperform if the message arrives too late or never becomes explicit.
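Subtitles are the easiest part of this layer to script. The sketch below writes cues in the widely used SubRip (`.srt`) layout: a running index, a `start --> end` timestamp line, then the text. The function names and the caption text are placeholders, not part of any tool mentioned here.

```python
def srt_timestamp(ms):
    """Format milliseconds as an SRT timestamp, HH:MM:SS,mmm."""
    hours, rest = divmod(ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    seconds, millis = divmod(rest, 1000)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d},{millis:03d}"

def to_srt(cues):
    """Turn (start_ms, end_ms, text) tuples into the text of an .srt file."""
    blocks = []
    for index, (start_ms, end_ms, text) in enumerate(cues, start=1):
        if end_ms <= start_ms:
            raise ValueError(f"cue {index} ends before it starts")
        timing = f"{srt_timestamp(start_ms)} --> {srt_timestamp(end_ms)}"
        blocks.append(f"{index}\n{timing}\n{text}\n")
    return "\n".join(blocks)  # a blank line separates cues

# Placeholder caption text for illustration.
cues = [
    (0, 1800, "The forest is older than the map."),
    (1800, 4000, "Keep walking."),
]
print(to_srt(cues))
```

Keeping cue times in the same milliseconds you used for trimming makes it easier to land the message early instead of after the viewer has scrolled past.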

Reframing for TikTok, Reels, YouTube, and ads

One generated clip usually needs more than one version.

A horizontal cinematic shot may look great on desktop and fail on vertical mobile. A vertical social cut may not work at all in a presentation or ad deck. A composition that feels balanced in 16:9 may lose the subject entirely in 9:16.

That is why reframing is still part of the workflow.

Post-production is where you adapt the same source into:

- vertical for Reels and TikTok

- horizontal for YouTube and websites

- square for certain paid social formats

- tighter crops for mobile-first viewing

This step is not just technical. It affects where the eye goes and how clearly the content reads in context.
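To show why a 16:9 shot can lose its subject in 9:16, here is a minimal sketch that computes the largest crop for a target aspect ratio and centres it on the subject. The function name and the idea of passing the subject position as a fraction of the frame are assumptions for illustration. Most editors let you do the same thing by dragging a crop box.

```python
def crop_for_aspect(src_w, src_h, ratio_w, ratio_h, focus_x=0.5, focus_y=0.5):
    """Return (x, y, w, h) of the largest crop with the target aspect ratio.

    focus_x and focus_y give the subject's position as fractions of the frame (0..1).
    """
    if src_w * ratio_h > src_h * ratio_w:
        # Source is wider than the target: keep full height, cut the sides.
        h = src_h
        w = src_h * ratio_w // ratio_h
    else:
        # Source is as tall as or taller than the target: keep full width.
        w = src_w
        h = src_w * ratio_h // ratio_w
    w -= w % 2  # some encoders expect even dimensions
    h -= h % 2
    # Centre the crop on the subject, then clamp it inside the frame.
    x = min(max(round(focus_x * src_w - w / 2), 0), src_w - w)
    y = min(max(round(focus_y * src_h - h / 2), 0), src_h - h)
    return x, y, w, h

print(crop_for_aspect(1920, 1080, 9, 16))               # (657, 0, 606, 1080)
print(crop_for_aspect(1920, 1080, 9, 16, focus_x=0.9))  # (1314, 0, 606, 1080)
print(crop_for_aspect(1920, 1080, 1, 1))                # (420, 0, 1080, 1080)
```

A vertical crop of a 1920x1080 frame keeps only about a third of the width, so a subject near the edge forces the crop window to follow it.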

Why AI video works better with a pipeline mindset

The biggest shift in 2026 is not that AI can generate more video. It is that creators now need a cleaner way to move from idea to final asset.

That is why a pipeline mindset matters more than a single-tool mindset.

A practical workflow usually looks more like this:

- Generate the first visual or shot.

- Compare several variations.

- Pick the best direction.

- Clean the audio.

- Trim and pace the clip.

- Add captions or voiceover.

- Reframe for the target platform.

- Export the final version.

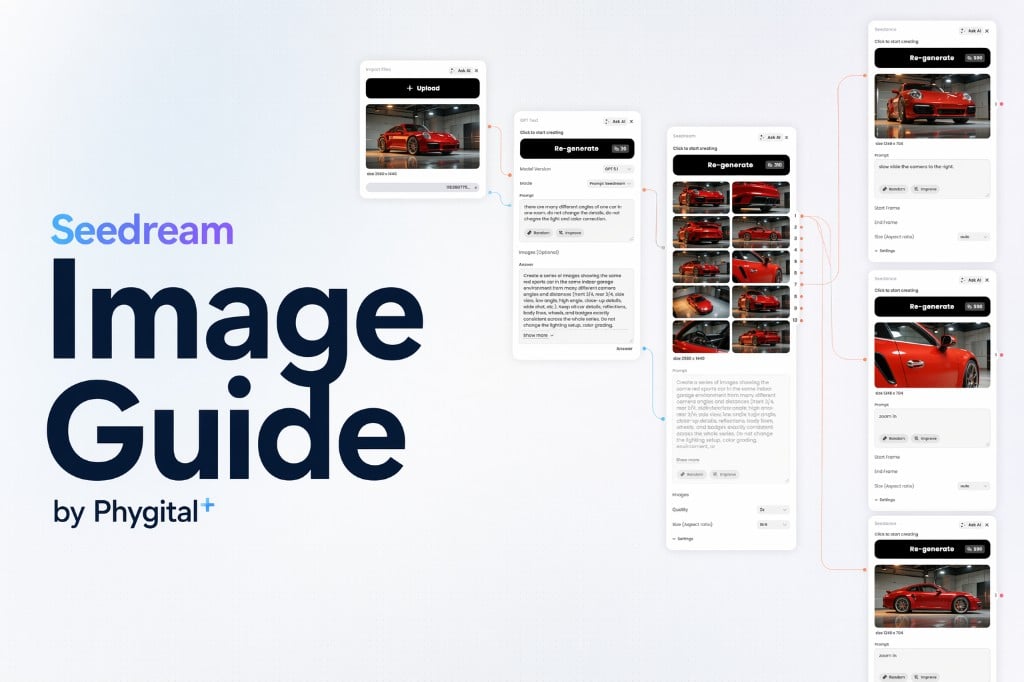

This is where Phygital+ fits naturally. Phygital+ is a node-based AI platform for designers and creators, built to make that kind of multi-step process easier to manage. Instead of jumping between isolated tools and tabs, you can treat generation, variation, comparison, and refinement as one connected visual workflow.

The point is not to make AI do everything. The point is to keep control over the whole chain.
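The pipeline idea can be sketched in a few lines of code. This is not Phygital+'s API or any real tool's interface: `Clip` and every step below are hypothetical stand-ins that only update metadata. The sketch shows the shape of the workflow, in which small steps applied in order share a common prefix and branch into platform versions.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Clip:
    """Hypothetical record describing a clip; no real media is touched."""
    name: str
    duration_ms: int
    aspect: str = "16:9"
    audio_clean: bool = False
    captions: tuple = ()

def clean_audio(clip):
    return replace(clip, audio_clean=True)

def trim(start_ms, end_ms):
    def step(clip):
        return replace(clip, duration_ms=min(end_ms, clip.duration_ms) - start_ms)
    return step

def add_captions(*lines):
    def step(clip):
        return replace(clip, captions=clip.captions + lines)
    return step

def reframe(aspect):
    def step(clip):
        return replace(clip, aspect=aspect)
    return step

def run_pipeline(clip, steps):
    """Apply each step in order; every step takes a Clip and returns a new one."""
    for step in steps:
        clip = step(clip)
    return clip

source = Clip(name="stop_motion_fox", duration_ms=5000)
shared = [clean_audio, trim(2400, 4400), add_captions("Meet the fox.")]
vertical = run_pipeline(source, shared + [reframe("9:16")])
square = run_pipeline(source, shared + [reframe("1:1")])
print(vertical)
print(square)
```

Because each step returns a new record, the source clip stays untouched and one set of shared edits can feed several exports.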

A simple post-production workflow in Phygital+

In practice, I would use a workflow like this:

- Generate several video variations from one concept.

- Compare branches side by side.

- Pick the strongest visual direction.

- Clean the audio if needed.

- Trim the sequence to the useful moment.

- Add subtitles or messaging.

- Export versions for social, ads, or presentation.

That kind of workflow is much more realistic than expecting one prompt to solve everything at once.

The generation model gives you raw visual material. Post-production gives it clarity. The workflow gives it consistency.

FAQ

Why is my AI-generated video still not ready to publish?

Because generation and post-production solve different problems. Generation creates the raw material. Post-production makes it watchable, clear, and usable in a real content format.

Do AI videos always need editing?

Not always, but usually. Even strong generations often benefit from trimming, reframing, subtitle work, or audio cleanup before publication.

What is the most common problem after AI video generation?

Usually pacing. A clip may look good, but the important action happens too late, too briefly, or without enough editorial clarity.

Why does audio matter so much if the visuals are strong?

Because audiences judge polish very quickly. Even a strong visual can feel amateur if the sound is distracting, unclear, or noisy.

Should I make one final version or several?

Usually several. One clip often needs different crops, timing, and overlays for TikTok, Reels, YouTube, paid ads, or landing pages.

Where does Phygital+ help in this process?

Phygital+ helps when the work is no longer just “generate one clip,” but “build a repeatable workflow from idea to final export.” That is where a node-based setup becomes more useful than a pile of disconnected tools.