When I first started using Runway seriously, what stood out was not just the generation quality. It was the feeling that I was no longer only prompting a model. I was shaping a shot.

That is what makes Runway different from many video tools. Some models feel strongest when you want one impressive clip from one prompt. Runway becomes more useful when you think like an editor or post-production partner. Not just “make a video,” but “change this shot, control this motion, push this sequence closer to the cut in my head.”

That makes it especially useful for ads, brand films, music visuals, product storytelling, and creative teams that need something between raw generation and traditional editing.

In this guide I want to focus on four practical things:

- which Runway model to use and why

- how to think about Runway prompts differently from standard text-to-video prompts

- when to use Gen-4.5, Gen-4 Turbo, and Aleph

- how to build a more controlled workflow in Phygital+ using Runway as a generation and editing layer

Runway versions compared: Gen-4.5 vs Gen-4 Turbo vs Aleph

Runway makes more sense once you stop looking for one universal best mode.

According to Runway’s official model docs, gen4.5 is the flagship video model. It accepts text or image input and is positioned as Runway’s highest-end video generation model, with strong motion quality, prompt adherence, visual fidelity, temporal consistency, and controllability.

gen4_turbo is different. It is not just “Gen-4.5 but cheaper.” In the official API docs it is an image-to-video model, which already tells you how to think about it. It is better for faster branching when you already have a visual starting point and want to test motion quickly.

gen4_aleph is the most important difference-maker in the whole lineup. It is not a normal generation model. It is a video-plus-text or video-plus-image editing workflow. That means Aleph is where Runway starts to feel less like a pure generator and more like a controllable post-production tool.

| Version | Best use | Why I would use it |
|---|---|---|
| gen4.5 | Highest-quality new generation | Best when I want a strong final cinematic result from text or image. |
| gen4_turbo | Fast image-to-video iteration | Useful for cheaper and faster branching from a starting image. |
| gen4_aleph | Video editing and transformation | Best when I already have footage and want to change, restyle, relight, or edit it. |
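The same choice can be written as a small routing rule. This is only a sketch of my own decision logic, not part of any Runway SDK: the function name `pick_runway_model` and its flags are hypothetical, and the returned strings are simply the model names used in this article.

```python
def pick_runway_model(has_video: bool, has_image: bool, has_text: bool,
                      draft: bool = False) -> str:
    """Map the inputs I have on hand to one of the model names in the table.

    Hypothetical helper for illustration; it does not call Runway.
    """
    if has_video:
        # Aleph edits existing footage and needs a text or image instruction.
        if not (has_text or has_image):
            raise ValueError("Aleph needs a text or image instruction with the video")
        return "gen4_aleph"
    if has_image and draft:
        # Turbo is image-to-video, so it only applies when a start image exists.
        return "gen4_turbo"
    if has_image or has_text:
        return "gen4.5"
    raise ValueError("need at least one of: video, image, text")


print(pick_runway_model(has_video=False, has_image=True, has_text=True, draft=True))
```

The `draft` flag encodes the idea that Turbo is a branching tool: the same image goes to Turbo while I am exploring and to Gen-4.5 once I know what I want.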

How to use Runway without treating it like every other video model

The easiest mistake with Runway is to prompt it like a generic text-to-video tool.

That works sometimes, but it misses what Runway is good at. Runway feels strongest when the creator already has some editorial intent. You are not only describing a scene. You are describing what the shot should become, how the camera should behave, and what change actually matters.

That is why I think Runway works best with an editor mindset:

- what is the source material

- what should change

- what should stay stable

- what kind of camera behavior is needed

- what mood or finish should the output carry

What Runway officially supports

Runway’s official API model list makes the product structure much clearer.

- Gen-4.5: accepts text or image input and outputs video. Best for premium cinematic generation.

- Gen-4 Turbo: accepts an input image and outputs video. Best for faster image-to-video exploration.

- Aleph: accepts video plus text or image and outputs video. Best for changing an existing shot, re-lighting, restyling, or reframing visual material.

- Act-Two: a character performance workflow that takes an image or video as input and outputs video.

Runway technical notes that are actually useful

Some technical details are worth surfacing because they change workflow choices.

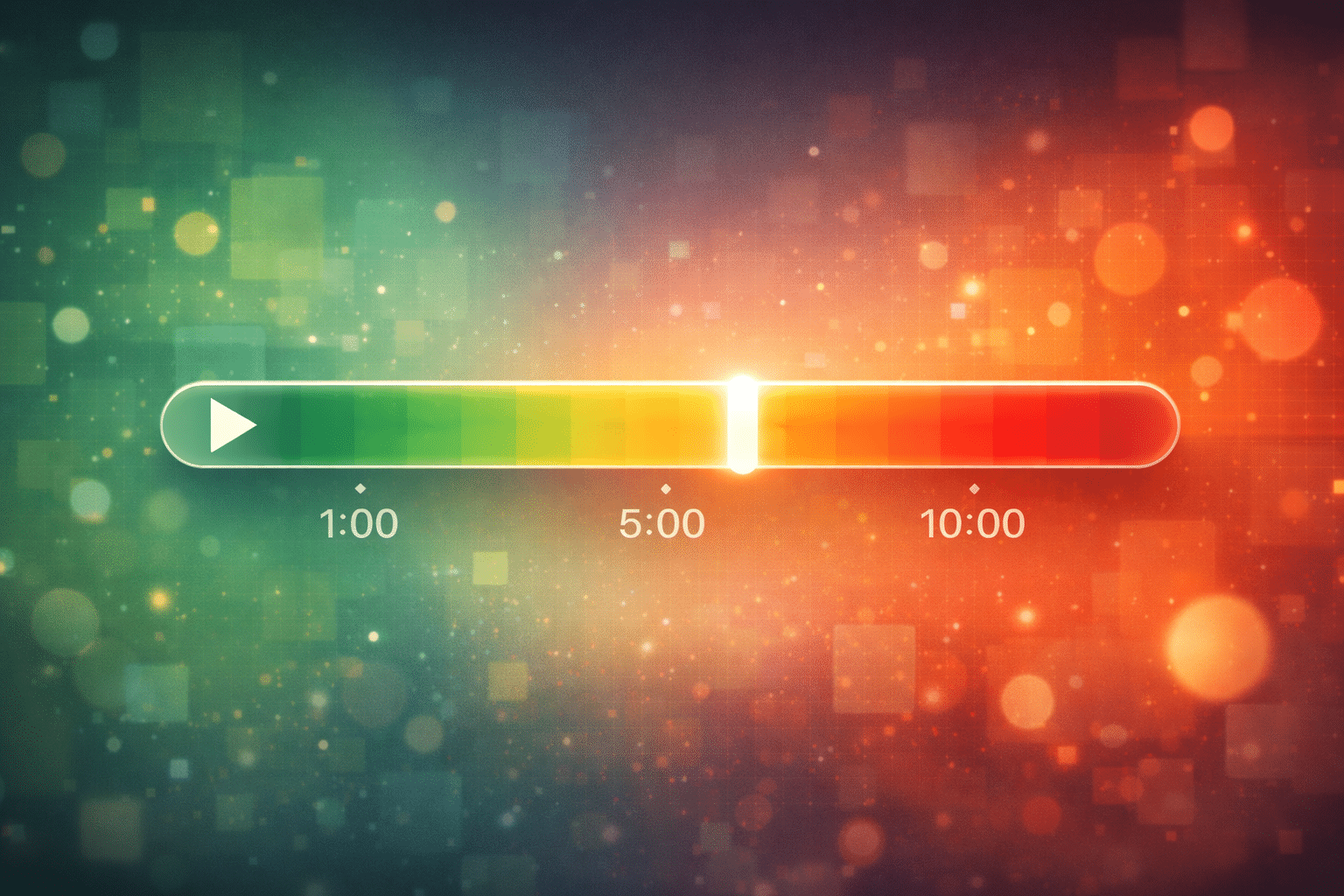

- Gen-4 Turbo supports 5-second and 10-second durations.

- Output runs at 24fps.

- Prompts can be up to 1,000 characters.

- Runway recommends trying Turbo first when testing generations, then moving to the higher-quality option if needed.
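These limits are easy to check before a job is submitted. The sketch below encodes only the numbers listed above (5 or 10 seconds, 24fps, 1,000 characters); the function and constant names are my own and are not taken from Runway's API.

```python
FPS = 24                      # output frame rate noted above
ALLOWED_DURATIONS_S = (5, 10)  # Gen-4 Turbo durations noted above
MAX_PROMPT_CHARS = 1000        # prompt length limit noted above


def check_turbo_request(prompt: str, duration_s: int) -> int:
    """Validate a Turbo request locally and return the expected frame count.

    Hypothetical pre-flight check; it does not contact Runway.
    """
    if duration_s not in ALLOWED_DURATIONS_S:
        raise ValueError(f"duration must be one of {ALLOWED_DURATIONS_S} seconds")
    if len(prompt) > MAX_PROMPT_CHARS:
        raise ValueError(f"prompt is {len(prompt)} characters, limit is {MAX_PROMPT_CHARS}")
    return duration_s * FPS


print(check_turbo_request("A steel wristwatch stands upright in shallow water.", 5))
```

A 5-second clip at 24fps is 120 frames and a 10-second clip is 240, which is a useful number to keep in mind when planning how much motion one prompt can realistically carry.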

For Gen-4.5, the main value is stronger motion quality, prompt adherence, and visual fidelity. For Aleph, the difference is not duration or fps, but edit behavior. The prompt is less about building a world from scratch and more about specifying the transformation.

Prompting theory for Runway: think like an editor, not just a director

Kling often rewards camera-direction thinking. Hailuo often rewards performance-first thinking. Runway rewards edit-intent thinking.

That means a Runway prompt often works better when it answers:

- what is the shot

- what is the exact subject or action

- what cinematic language is needed

- what should be edited or transformed

- what should remain stable

This is especially true with Aleph. If you are working from existing footage, the most important prompt component is often not the scene description itself, but the edit instruction: add, remove, change, replace, relight, restyle.
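One way to keep edit prompts disciplined is to assemble them from explicit change and preserve lists. This is a minimal sketch, assuming the six edit verbs named above are the ones I want to enforce; `build_edit_prompt` is a hypothetical helper, not a Runway function, and Aleph itself does not require prompts to start with these words.

```python
from typing import Sequence

# Edit verbs taken from the paragraph above.
EDIT_VERBS = ("add", "remove", "change", "replace", "relight", "restyle")


def _join(items: Sequence[str]) -> str:
    """Join phrases as 'a', 'a and b', or 'a, b and c'."""
    items = list(items)
    if len(items) == 1:
        return items[0]
    return ", ".join(items[:-1]) + " and " + items[-1]


def build_edit_prompt(changes: Sequence[str], preserve: Sequence[str] = ()) -> str:
    """Build an edit prompt: one sentence per change, then one 'Preserve' sentence."""
    if not changes:
        raise ValueError("at least one edit instruction is required")
    sentences = []
    for change in changes:
        words = change.split()
        # Reject vague instructions that do not open with an edit verb.
        if not words or words[0].lower() not in EDIT_VERBS:
            raise ValueError(f"instruction should start with one of {EDIT_VERBS}: {change!r}")
        sentences.append(change.strip().rstrip(".") + ".")
    if preserve:
        sentences.append("Preserve " + _join(preserve) + ".")
    return " ".join(sentences)


print(build_edit_prompt(
    ["Change the lighting to colder moonlit tones"],
    ["the original camera movement", "subject pacing"],
))
```

Separating what changes from what stays makes it obvious when a prompt is missing its stability constraint, which in my experience is the part most often forgotten.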

Prompt structure for Runway

For Runway, I would use a prompt table that adds Edit intent as a core layer.

| Subject | Action | Scene | Camera | Lighting | Edit intent |
|---|---|---|---|---|---|
| Who or what is on screen | What happens physically | Where it happens | How the shot is seen | What shapes the mood | What should change or stay controlled |
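The six columns can be kept as structured fields and rendered into a prompt string at the last moment. The sketch below is one possible way to do that; the `ShotPrompt` class and its `render` method are my own illustration, and the sentence order is an assumption rather than something Runway prescribes.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    """One field per column of the prompt table; empty fields are skipped."""
    subject: str
    action: str
    scene: str = ""
    camera: str = ""
    lighting: str = ""
    edit_intent: str = ""

    def render(self) -> str:
        # Subject and action read best as a single opening sentence.
        opening = f"{self.subject.strip()} {self.action.strip()}"
        parts = [opening, self.scene, self.camera, self.lighting, self.edit_intent]
        # Normalise each non-empty part to end with exactly one period.
        return " ".join(p.strip().rstrip(".") + "." for p in parts if p.strip())


shot = ShotPrompt(
    subject="A steel wristwatch",
    action="stands upright in shallow water",
    camera="The camera circles slowly around the face",
    lighting="Dark reflective environment, elegant ad lighting",
)
print(shot.render())
```

Keeping the fields separate makes it cheap to branch: I can swap only the camera or only the lighting and regenerate, instead of rewriting the whole prompt by hand.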

How to build a Runway workflow in Phygital+

Runway becomes much more useful when it is treated as one layer in a larger workflow rather than a standalone destination.

In practice, I would use Phygital+ like this:

- Generate or prepare a strong still image or key visual.

- Branch into Gen-4 Turbo for quick motion exploration.

- Move the best direction into Gen-4.5 if the final shot needs more quality.

- If a generated or external clip is already close, move it into Aleph instead of regenerating from scratch.

- Compare outputs side by side and keep only the branch that solves the actual creative problem.
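The steps above can be sketched as a simple stage planner. This is a simplification under my own assumptions: the function name, the two flags, and the stage labels are hypothetical, nothing here calls Phygital+ or Runway, and in practice an Aleph pass can also follow a generated clip rather than replace generation entirely.

```python
from typing import List


def plan_stages(clip_is_close: bool, needs_final_quality: bool) -> List[str]:
    """Return an ordered list of workflow stages as plain labels."""
    if clip_is_close:
        # An existing clip that is nearly right goes to editing, not regeneration.
        return [
            "gen4_aleph: edit the existing clip",
            "compare outputs and keep one branch",
        ]
    stages = [
        "prepare a strong still image or key visual",
        "gen4_turbo: quick motion exploration",
    ]
    if needs_final_quality:
        stages.append("gen4.5: final-quality render of the best direction")
    stages.append("compare outputs and keep one branch")
    return stages


for stage in plan_stages(clip_is_close=False, needs_final_quality=True):
    print(stage)
```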

That matters because not every problem is a generation problem. Sometimes the right move is not “make a new shot,” but “fix this shot,” “change the framing,” “change the lighting,” or “push the style further.”

That is why Runway sits very naturally inside a creative pipeline. It can serve as first-pass ideation, image-to-video exploration, premium shot generation, and an edit-and-refinement layer.

About Phygital+

Phygital+ is a node-based AI platform for designers and creators. The idea behind it is simple: instead of forcing creative teams to jump between isolated AI tools, prompts, tabs, and exports, we bring different models into one visual workflow.

That matters in a Runway article because Runway is rarely the only step. A strong Runway workflow usually begins somewhere else and ends somewhere else. You might start with a still image, refine the direction in a text model, branch motion tests in a faster video model, then use Runway to generate or edit the final shot with more control.

That is exactly the kind of process Phygital+ is built for. It gives creative teams more control over the AI process without turning everything into a technical setup exercise. In practical terms, that means you can treat Runway not as a separate destination, but as one powerful layer inside a larger visual pipeline.

20 Runway prompt examples

These examples are built to match official Runway logic and model behavior, but they are adapted into new scenes and use cases rather than copied from docs.

Cinematic generation

Glass corridor

A woman in a silver coat walks through a long glass corridor at night. Reflections drift across the walls as she turns slightly toward the camera. Medium tracking shot, cool blue lighting, cinematic realism.

Rainy alley

A teenage boy stands under a flickering sign in a wet alley, then slowly steps forward into the light. The camera holds close to his face before drifting wider. Cold rain, reflective pavement, moody thriller atmosphere.

Desert horse shot

A rider in dark clothing moves across a wide desert plain at sunrise. Dust trails behind the horse while the camera follows from a low side angle. Warm natural light, cinematic scale, restrained motion blur.

Product advertising

Perfume bottle hero

A glass perfume bottle rests on black stone while a narrow beam of light moves across its surface. The camera slowly pushes in as fine reflections glide over the label. Luxury studio mood, precise highlights, premium beauty campaign.

Watch commercial

A steel wristwatch stands upright in shallow water. Tiny ripples spread outward as the camera circles slowly around the face. Clean macro detail, dark reflective environment, elegant ad lighting.

Tech product reveal

A matte-black wireless earbud case opens on a dark surface while soft interface reflections move across the background. Slow dolly-in, cool futuristic light, premium consumer tech style.

Lifestyle and social

Rooftop friends

Two friends stand on a rooftop at golden hour. One laughs while leaning against the rail, the other turns back toward the skyline. Medium-wide handheld shot, editorial lifestyle mood, warm natural light.

Street fashion portrait

A woman in an oversized cream blazer steps into frame, adjusts the sleeve, and pauses with direct eye contact. Fast but smooth push-in, daylight street wall, crisp social campaign look.

Cafe scene

A man sits by a cafe window on a grey morning, tapping his fingers once before looking out toward the street. Static medium shot with subtle ambient movement, quiet slice-of-life realism.

Music and atmosphere

Blue room performance

A singer stands in a dim blue room while long fabric strips move slowly in the air around them. The camera drifts in a calm arc as they raise their head toward the lens. Poetic music video mood, soft haze, controlled motion.

Spotlight rehearsal

A dancer stands alone on a rehearsal stage. A single spotlight opens above them as the camera pushes forward and stops just before the first movement begins. Minimalist theatrical mood, high contrast lighting.

Neon synth scene

A performer stands in front of a wall of analog synths while red and violet practical lights flicker around the frame. Slow handheld motion, intimate close-up, moody editorial music aesthetic.

Fantasy and stylized

Forest wanderer

Behind view of a lone traveler moving through a dark forest as heavy fog curls around the trees. The camera follows in one continuous shot. Saturated fantasy color palette, old-film atmosphere, slow suspenseful pacing.

Stop-motion fox

A dressed fox on a motorbike rides along a dirt road through open countryside. Slightly imperfect movement, handcrafted stop-motion texture, warm late-afternoon light, whimsical cinematic tone.

Floating whale

A massive whale drifts through a cold mountain sky while clouds scrape along the peaks. Wide shot with a slow side move, muted colors, dreamlike realism.

The two short videos below were generated from the fantasy prompts above, so you can see how Runway handles stylized mood, pacing, and transformation in practice.

Forest Wanderer

A generated result from the forest wanderer prompt above, showing how Runway handles mood, pacing, and a continuous follow shot.

Stop-Motion Fox

A generated result from the stop-motion fox prompt, useful for seeing how stylized motion and handcrafted texture translate in practice.

Aleph edit prompts

Re-light a scene

Change the lighting to colder moonlit tones. Add stronger reflections on the floor and deepen the shadows in the background. Preserve the original camera movement and subject pacing.

Wider framing

Change the camera to a wider shot that reveals the full subject and more of the room. Keep the original timing and preserve the subject’s movement.

Seasonal change

Change the season in the original video to winter. Add snow on the road, colder air, and subtle frost on nearby surfaces. Keep the camera motion and subject path unchanged.

Style adaptation

Restyle the video into a high-end editorial fashion film. Keep the original blocking, but simplify the palette and make the lighting more dramatic and directional.

Background cleanup

Remove distracting background objects and replace the environment with a cleaner studio backdrop. Preserve the subject’s silhouette, gestures, and timing.

FAQ

Which Runway model should I start with?

If you already have an input image and want to test motion quickly, start with Gen-4 Turbo. If you want a stronger final result from text or image, move to Gen-4.5. If you already have footage and want to edit it instead of regenerate it, use Aleph.

What makes Runway different from tools like Kling or Hailuo?

Runway feels stronger as an editing and transformation workflow, not just as a one-prompt generator. The existence of Aleph changes the whole product logic because you can work on footage you already have, not only on scenes you generate from scratch.

When should I use Aleph instead of generating a new shot?

Use Aleph when the existing shot is already close and the main task is to change or refine it. Re-lighting, restyling, reframing, and controlled transformations are stronger use cases for Aleph than full regeneration.

Is Gen-4 Turbo lower quality than Gen-4.5?

Yes. In practical workflow terms it should be treated as the faster and cheaper branch-testing layer. Runway itself recommends starting with the cheaper fast model when testing ideas, then moving to the premium model if necessary.

Does Runway support both text-to-video and image-to-video?

Yes, but the mode depends on the model. Gen-4.5 accepts text or image. Gen-4 Turbo is image-to-video. Aleph is for video-plus-text or video-plus-image transformation.

How should I write prompts for Runway?

For generation prompts, describe the shot clearly: subject, action, scene, camera, and lighting. For Aleph, prompt more like an editor: say what should change, what should stay stable, and what kind of transformation the shot needs.

Why do I get a result that looks impressive but not useful?

Because cinematic output is not the same as usable output. In Runway workflows, the question is often not whether the video looks good, but whether it solves the actual edit or generation problem you had at the start.